Last Updated January 30, 2012

Note: The code used in these notes is adapted from the excellent shadow mapping tutorial at Riemer's XNA Tutorials. The code in the example projects linked from this page has been somewhat simplified and modified from the originals in order to better highlight the key techniques.

Each image is linked to the project that created it.

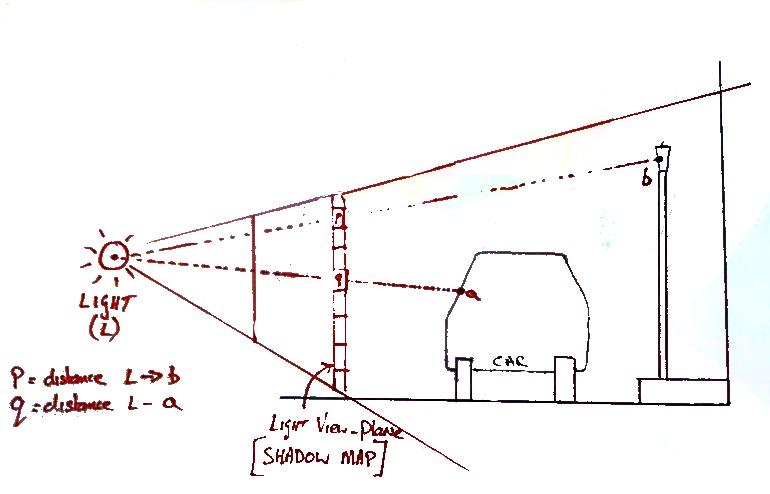

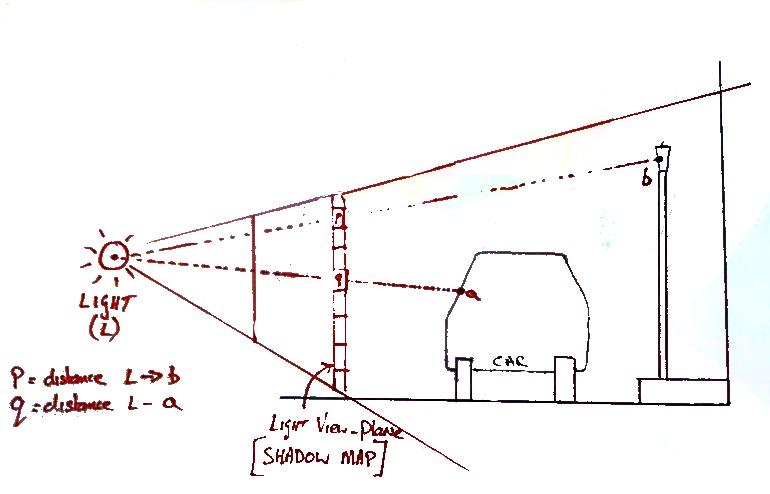

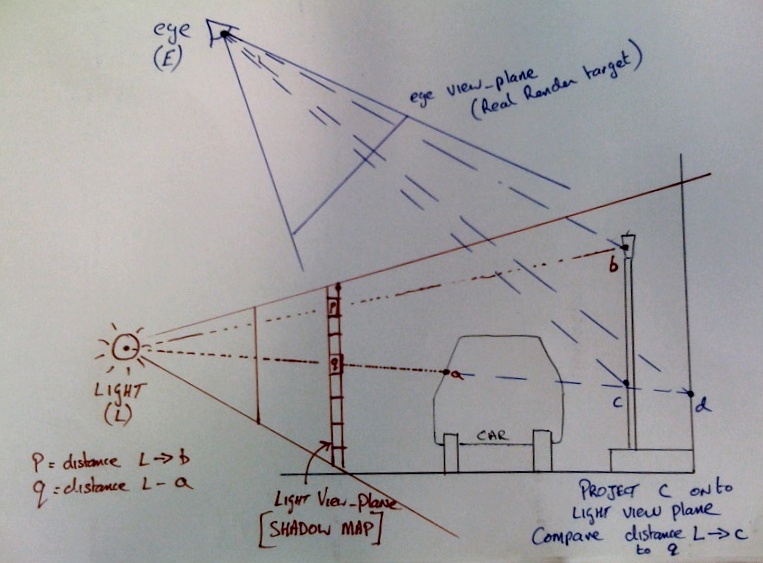

Shadow mapping techniques leverage the existing infrastructure of the Z-buffer. The method follows directly from the idea that points in shadow are "hidden" from the light. In other words, shadows are "hidden surfaces" from the point of view of a light. If we pretend that the light point is the center of projection (i.e. an eye point), we can render the scene from the light's point of view, using a Z-buffer to compute the surfaces visible to the light. The resulting Z-buffer records, for each pixel, the point that is closest to the light. Any point that has a "farther" Z value at a given pixel is invisible to the light and hence is in shadow.

Note that when we are calculating the hidden surfaces from the point of view of each light source, we only care about the depth information. We are not interested in performing lighting calculations for these polygons, because the "light's eye views" will not normally be seen by the user. This permits faster rendering when precalculating the shadow Z-buffers.

The scene rendered from the point of view of the light source is not shown to the user. Instead, it is stored as a texture for use in the next phase of the scene processing. This texture is called the shadow map.

While the scene is being rendered from the camera's point of view, each pixel's distance from the light source is measured. If the pixel's distance is GREATER than the corresponding distance in the shadow map, this means that the pixel is invisible to the light (is hidden by another object) and is therefore in shadow, so the appropriate lighting calculation can be made.

In principle this is a simple and elegant procedure. In practice, however, there are complications and problems associated with it, notably limited depth precision and aliasing, which are discussed at the end of these notes.

We start with a scene without any shadowing.

In this scene a light source is placed on the left near the ground. We would expect that the car and lamppost would cast a shadow on the wall.

The first phase of shadow mapping involves creating a shadow map for each light source. The shadow map is a projection of the scene from the position of the light source. The shadow map is not coloured using materials, textures and lighting (as in a normal rendering). Instead, it is coloured according to the distance of each point from the light source (smaller distance = smaller number = darker pixel). The shadow map for this scene is shown below.

This application generates a shadow map for the scene and renders it to the user. [This rendering is for demonstration purposes only; the user would not normally be exposed to the shadow map.] The rendering is done by moving the camera to the light position, pointing the camera at the car and rendering the scene almost as normal. The only important change is in the pixel shader. Instead of using a lighting model to calculate the pixel colour, the distance of the pixel from the camera (light) determines the colour.

float4x4 xWorldViewProjection;

float4x4 xLightWorldViewProjection;

float4 xLightPos;

float4x4 xWorldInv;

Texture xColoredTexture;

sampler ColoredTextureSampler = sampler_state { texture = <xColoredTexture>; magfilter = LINEAR; minfilter = LINEAR; mipfilter=LINEAR; AddressU = mirror; AddressV = mirror;};

Texture xShadowMap;

sampler ShadowMapSampler = sampler_state { texture = <xShadowMap>; AddressU = clamp; AddressV = clamp;};

//------- Technique: ShadowMap --------

struct SMapVertexToPixel

{

float4 Position : POSITION;

float4 PositionForPS : TEXCOORD0;

};

struct SMapPixelToFrame

{

float4 Color : COLOR0;

};

SMapVertexToPixel ShadowMapVertexShader( float4 inPos : POSITION)

{

SMapVertexToPixel Output = (SMapVertexToPixel)0;

Output.Position = mul(inPos, xLightWorldViewProjection);

Output.PositionForPS = Output.Position; // make a copy to send to pixel shader

return Output;

}

SMapPixelToFrame ShadowMapPixelShader(SMapVertexToPixel PSIn)

{

SMapPixelToFrame Output = (SMapPixelToFrame)0;

Output.Color = PSIn.PositionForPS.z/PSIn.PositionForPS.w;

return Output;

}

technique ShadowMap

{

pass Pass0

{

VertexShader = compile vs_2_0 ShadowMapVertexShader();

PixelShader = compile ps_2_0 ShadowMapPixelShader();

}

}

As discussed previously, the shadow map should not be displayed on screen, but instead needs to be stored in a texture. This is done by creating a new render target. A render target is a region of video memory used for drawing, similar to the back buffer. A user-created render target is not involved in the buffer swap process and can have a different resolution, size and colour depth than the actual display surface.

A RenderTarget2D is a subclass of Texture2D, so the image produced can be sent to the shader as a normal texture.

During the Draw() function of our game we can instruct XNA to change render targets.

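// the back buffer settings, reused here for the depth-stencil format

PresentationParameters pp = GraphicsDevice.PresentationParameters;

// 512x512 target with a single 32-bit float channel and no mipmaps

shadowMapRenderTarget = new RenderTarget2D(GraphicsDevice, 512, 512, false, SurfaceFormat.Single, pp.DepthStencilFormat);

// subsequent draw calls render into the shadow map rather than the back buffer

GraphicsDevice.SetRenderTarget(shadowMapRenderTarget);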

See here for details of the code to create and set render targets and copy render target to a texture.

The shadow map is applied while processing each pixel as the scene is being rendered to the back buffer from the camera position. The trick to applying the shadow map is to find the texture coordinates within the shadow map for a pixel (done in the pixel shader).

Find the texture coordinates by projecting the vertex using the light's world-view-projection matrix. This value is passed to the pixel shader using a texture coordinate semantic. (As usual, we also need to project the vertex using the camera's world-view-projection matrix, to determine the vertex's projected position.)

SSceneVertexToPixel ShadowedSceneVertexShader( float4 inPos : POSITION, float2 inTexCoords : TEXCOORD0, float3 inNormal : NORMAL)

{

SSceneVertexToPixel Output = (SSceneVertexToPixel)0;

Output.Position = mul(inPos, xWorldViewProjection);

Output.Position3D=inPos;

float4 vertexPosInLightSpace=mul(inPos, xLightWorldViewProjection);

Output.ShadowMapSamplingPos = vertexPosInLightSpace;

Output.Normal = inNormal;

Output.TexCoords = inTexCoords;

return Output;

}
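The vertex shader above fills an SSceneVertexToPixel structure that is not listed in these notes. As a rough sketch only, the declarations might look like the following. The field names are taken from the shaders on this page, but the types and the TEXCOORD slots are assumptions and may differ from the example project.

```hlsl
// Hypothetical declarations: field names come from the shaders in these notes,
// the types and TEXCOORD slot numbers are assumed for illustration.
struct SSceneVertexToPixel
{
    float4 Position             : POSITION;   // position projected by the camera
    float4 ShadowMapSamplingPos : TEXCOORD0;  // same vertex projected by the light
    float3 Normal               : TEXCOORD1;  // surface normal for diffuse lighting
    float2 TexCoords            : TEXCOORD2;  // coordinates into the colour texture
    float4 Position3D           : TEXCOORD3;  // untransformed position for lighting
};

struct SScenePixelToFrame
{
    float4 Color : COLOR0;                    // final pixel colour
};
```

ShadowMapSamplingPos is deliberately passed on without dividing by w. The divide is done per pixel, because the interpolation of the projected position across a triangle is only correct before the perspective divide.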

In the pixel shader, the coordinates of the pixel projected onto the light's view plane need to be converted from homogeneous clip space into texture coordinates:

First divide by w to get normalized device coordinates

Xndc = Xproj/w

Yndc = Yproj/w

Then scale from the range [-1..1] to [0..1] for a texture coordinate, negating y because the top row of a texture has y = 0

Xtexture = Xndc/2 + 0.5

Ytexture = -Yndc/2 + 0.5

These texture coordinates are then used to look up the shadow map for that pixel. The shadow map contains the depth, as seen from the light, of the object nearest to the light along the ray through that pixel.
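The conversion can be wrapped in a small helper. This is only a sketch: the function name ShadowMapTexCoords is hypothetical and does not appear in the example projects, which perform the same arithmetic inline in the pixel shader.

```hlsl
// Hypothetical helper: converts a position projected by the light
// (homogeneous clip space) into shadow-map texture coordinates.
float2 ShadowMapTexCoords(float4 posInLightClipSpace)
{
    // Perspective divide: x and y now lie in [-1, 1] inside the light's view
    float2 ndc = posInLightClipSpace.xy / posInLightClipSpace.w;

    // Remap [-1, 1] to [0, 1]; y is negated because row 0 of a texture is the top
    return float2(ndc.x, -ndc.y) * 0.5f + 0.5f;
}
```

A result outside [0..1] in either component means the pixel lies outside the area covered by the shadow map, which is the case the pixel shader below tests for with saturate().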

SScenePixelToFrame ShadowedScenePixelShader(SSceneVertexToPixel PSIn)

{

SScenePixelToFrame Output = (SScenePixelToFrame)0;

float2 ProjectedTexCoords;

ProjectedTexCoords[0] =( PSIn.ShadowMapSamplingPos.x/PSIn.ShadowMapSamplingPos.w)/2.0f +0.5f;

ProjectedTexCoords[1] = (-PSIn.ShadowMapSamplingPos.y/PSIn.ShadowMapSamplingPos.w)/2.0f +0.5f; // y = 0 is the top of a texture, so reverse the y coordinate

bool inShadow = false;

bool outsideShadowMap= true;

float StoredDepthInShadowMap=1;

// first check if the texture coordinates lies in the range [0..1]

if ((saturate(ProjectedTexCoords.x) == ProjectedTexCoords.x) && (saturate(ProjectedTexCoords.y) == ProjectedTexCoords.y))

{

outsideShadowMap=false;

//check if in shadow

...

}

//if in shadow, do appropriate lighting

This application renders the scene from the point of view of the camera, but uses the looked-up shadow map value to colour each pixel. Details of the code are found here. In the next (final) phase we will use this information to determine if pixels are in shadow. [In the image below, pixels which fall outside the shadow map are deliberately tinted red. Note that these pixels inherit the value of the nearest edge of the shadow map, because the texture sampler clamps its coordinates.]

The final stage is to figure out if a pixel is in shadow. This is done by comparing the distance (d) to the light source to the distance in the corresponding part of the shadow map (m).

If d > m then the point is in shadow and the lighting model should take this into account.

Here is a shader which will correctly render the shadows. [It is a simplified version of the one discussed here].

//------- Technique: ShadowedScene --------

// first check if the texture coordinates lies in the range [0..1]

if ((saturate(ProjectedTexCoords.x) == ProjectedTexCoords.x) && (saturate(ProjectedTexCoords.y) == ProjectedTexCoords.y))

{

outsideShadowMap=false;

// get depth from shadow map

StoredDepthInShadowMap = tex2D(ShadowMapSampler, ProjectedTexCoords).x;

// compare shadow-map depth to the actual depth

// subtracting a small value (the depth bias) avoids floating point comparison errors

// when the two distances are nearly equal

float pixelDepth=PSIn.ShadowMapSamplingPos.z/PSIn.ShadowMapSamplingPos.w;

if ((pixelDepth-0.01) > StoredDepthInShadowMap && pixelDepth < 1.0 )

{

inShadow=true;

}

}

// retrieve color from texture map (regular texture map)

float4 ColorComponent = tex2D(ColoredTextureSampler, PSIn.TexCoords);// from texture map

// ambient light

Output.Color = ColorComponent*0.2;

float DiffuseLightingFactor=0;

if(!inShadow){// in bright light, add light from light source

// calculate diffuse lighting

DiffuseLightingFactor =

saturate(DiffuseReflection(xLightPos, PSIn.Position3D, PSIn.Normal));

}

Output.Color +=ColorComponent*DiffuseLightingFactor;

}
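The shader above calls a DiffuseReflection helper that is not listed in these notes. A plausible sketch, assuming it implements simple Lambertian (cosine) shading and that the light position, surface position and normal are all expressed in the same space, is given here. The body is an assumption and the example project may define it differently.

```hlsl
// Hypothetical sketch of the DiffuseReflection helper used above.
// Assumes all three arguments are expressed in the same coordinate space.
float DiffuseReflection(float4 lightPos, float3 pos3D, float3 normal)
{
    // Unit vector from the surface point towards the light
    float3 lightDir = normalize(lightPos.xyz - pos3D);

    // Lambert's cosine law: 1 when facing the light, 0 at grazing angles.
    // The result is negative for surfaces facing away; the caller saturates it.
    return dot(lightDir, normalize(normal));
}
```

The normal is renormalized because interpolating normals across a triangle generally shortens them.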

Below is an extract from this application.

private void DrawScene(string technique)

{

effect.CurrentTechnique = effect.Techniques[technique];

effect.Parameters["xWorldViewProjection"].SetValue(Matrix.Identity * viewMatrix * projectionMatrix);

effect.Parameters["xColoredTexture"].SetValue(StreetTexture);

effect.Parameters["xLightPos"].SetValue(LightPos);

effect.Parameters["xLightPower"].SetValue(LightPower);

effect.Parameters["xWorld"].SetValue(Matrix.Identity);

effect.Parameters["xLightWorldViewProjection"].SetValue(Matrix.Identity * lightViewProjectionMatrix);

effect.Parameters["xShadowMap"].SetValue(texturedRenderedTo);

effect.Begin();

foreach (EffectPass pass in effect.CurrentTechnique.Passes)

{

pass.Begin();

device.VertexDeclaration = new VertexDeclaration(device, myownvertexformat.Elements);

device.Vertices[0].SetSource(vb, 0, myownvertexformat.SizeInBytes);

device.DrawPrimitives(PrimitiveType.TriangleStrip, 0, 18);

pass.End();

}

effect.End();

Matrix worldMatrix;

worldMatrix = Matrix.CreateScale(4f, 4f, 4f) * Matrix.CreateRotationX(MathHelper.PiOver2) * Matrix.CreateRotationZ(MathHelper.Pi) * Matrix.CreateTranslation(carPos);

DrawModel(technique, CarModel, worldMatrix, CarTextures, false);

worldMatrix = Matrix.CreateScale(0.05f, 0.05f, 0.05f) * Matrix.CreateRotationX((float)Math.PI / 2) * Matrix.CreateTranslation(4.0f, 35, 1);

DrawModel(technique, LamppostModel, worldMatrix, LamppostTextures, true);

worldMatrix = Matrix.CreateScale(0.05f, 0.05f, 0.05f) * Matrix.CreateRotationX((float)Math.PI / 2) * Matrix.CreateTranslation(4.0f, 5, 1);

DrawModel(technique, LamppostModel, worldMatrix, LamppostTextures, true);

}

protected override void Draw(GameTime gameTime)

{

//Create shadow map

device.SetRenderTarget(0, renderTarget);

device.Clear(ClearOptions.Target | ClearOptions.DepthBuffer, Color.Black, 1.0f, 0);

DrawScene("ShadowMap");

device.ResolveRenderTarget(0);

//copy shadow map to texture

texturedRenderedTo = renderTarget.GetTexture();

// render scene

device.SetRenderTarget(0, null);

device.Clear(ClearOptions.Target | ClearOptions.DepthBuffer, Color.Black, 1.0f, 0);

DrawScene("ShadowedScene");

// draw shadow map in top-left corner

SpriteBatch sprite = new SpriteBatch(device);

sprite.Begin(SpriteBlendMode.None, SpriteSortMode.Immediate, SaveStateMode.SaveState);

sprite.Draw(texturedRenderedTo, new Vector2(0, 0), null, Color.White, 0, new Vector2(0, 0),0.6f , SpriteEffects.None, 1);

sprite.End();

// draw text

spriteBatch.Begin(SpriteBlendMode.Additive, SpriteSortMode.Deferred, SaveStateMode.SaveState);

string output = "Use Cursor Keys to move light or car position";

Vector2 FontOrigin = Vector2.Zero;

// Draw the string

spriteBatch.DrawString(Font1, output, FontPos, Color.LightGreen,

0, FontOrigin, 1.0f, SpriteEffects.None, 0.5f);

spriteBatch.End();

base.Draw(gameTime);

}

}

}

The distance of objects from the light source can vary continuously; however, a shadow map can only store distance with a limited precision. Because of this, a depth bias value has to be subtracted from every computed distance when comparing it to the depth in the shadow map.

Too small a depth bias will cause objects to be shadowed when they are not. Too large a depth bias will cause objects to be lit when they are a short distance behind a shadowing object.

This effect is further exaggerated by the fact that depth values are not linearly distributed by perspective projection. Most of the depth range is used up close to the near plane, so the far part of the view volume is resolved far more coarsely.

One solution is to choose a suitable depth bias for a given scene experimentally. Another is to use all the channels in the shadow map to store distance to a higher precision. Finally, the projected distance can be remapped to provide a linear distribution of depth values.
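The last option, a linear distribution of depth values, could be sketched as follows. This is not taken from the example projects. It assumes the light uses a perspective projection, for which the clip-space w component equals the distance in front of the light, and it introduces a hypothetical parameter xLightFarPlane holding the far-plane distance of the light's projection. The struct and function names are also hypothetical.

```hlsl
float4x4 xLightWorldViewProjection; // as declared earlier; repeat only if compiled on its own
float    xLightFarPlane;            // hypothetical: far-plane distance of the light's projection

struct LinearSMapVertexToPixel
{
    float4 Position    : POSITION;
    float  LinearDepth : TEXCOORD0;  // 0 at the light, 1 at the far plane
};

LinearSMapVertexToPixel LinearShadowMapVertexShader(float4 inPos : POSITION)
{
    LinearSMapVertexToPixel Output = (LinearSMapVertexToPixel)0;
    Output.Position = mul(inPos, xLightWorldViewProjection);

    // For a perspective projection, w is the view-space depth, so dividing by
    // the far-plane distance gives a depth that grows linearly with distance
    Output.LinearDepth = Output.Position.w / xLightFarPlane;
    return Output;
}

float4 LinearShadowMapPixelShader(LinearSMapVertexToPixel PSIn) : COLOR0
{
    float d = PSIn.LinearDepth;
    return float4(d, d, d, 1.0f);   // same depth in every colour channel
}
```

If the shadow map is written this way, the scene pass has to compute the pixel's depth with the same formula (w divided by the far-plane distance) instead of z/w, otherwise the two values being compared are not in the same units.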

The image above shows the effect of too small a bias value.

A shadow map is a texture, and sampling any texture can cause severe aliasing effects. One particular effect is very noticeable when either the shadow map resolution is too small or the light source is relatively distant from the objects being lit: the shadows take on a very pixelated appearance. This can be overcome somewhat by sampling the shadow map using bilinear filtering, but this merely smooths out the "stair-case" effect. It still leaves a sharp shadow edge and has no real impact on extreme aliasing.

Aliasing due to low shadowmap resolution

One way to remove these artefacts is to employ "percentage closer filtering" (PCF).

PCF leads to a blurring of the shadow edges (link to Shader file)
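A minimal sketch of the idea behind PCF, assuming a 3x3 neighbourhood: instead of one depth comparison per pixel, the comparison is repeated for the surrounding shadow-map texels and the results are averaged. The function name, its parameters and the 3x3 kernel size are assumptions for illustration and are not taken from the linked shader file.

```hlsl
// Hypothetical 3x3 percentage closer filter.
// Returns the fraction of neighbouring shadow-map texels that leave the pixel lit:
// 1 = fully lit, 0 = fully in shadow.
float PcfLitFraction(sampler2D shadowSampler, float2 uv,
                     float pixelDepth, float depthBias, float texelSize)
{
    float litSamples = 0.0f;

    for (int y = -1; y <= 1; y++)
    {
        for (int x = -1; x <= 1; x++)
        {
            // Step one texel at a time around the original lookup position
            float2 offset = float2(x, y) * texelSize;
            float storedDepth = tex2D(shadowSampler, uv + offset).x;

            // Same biased comparison as the single-sample test
            litSamples += ((pixelDepth - depthBias) > storedDepth) ? 0.0f : 1.0f;
        }
    }

    return litSamples / 9.0f;   // average over the 9 comparisons
}
```

With the 512x512 shadow map used earlier, texelSize would be 1.0/512.0, and the returned fraction would scale the diffuse term in place of the inShadow flag. Nine texture lookups per pixel may require a higher shader profile than ps_2_0 on some hardware.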

© Ken Power 1996-2016